Okay, so that hasn’t happened yet, but the folks over at Narrative Science, a company that has created software that performs “automated narrative generation,” confidently predict that the company’s program will win a Pulitzer Prize by 2016.

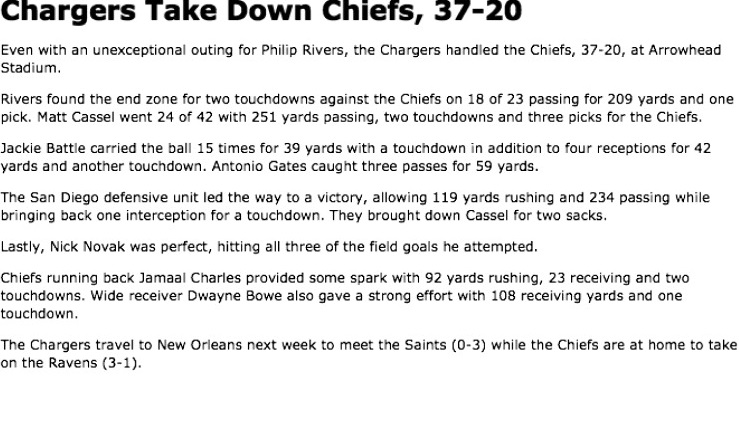

“Let’s back up a second,” you might be thinking. As far as we know, there’s no such thing as the “homeostatic newspaper” that autonomously gathers and publishes its own news, as described by Philip K. Dick in his short story “If There Were No Benny Cemoli.” And this is, after all, the real world, not some science-fiction one in which robots do everything. But that gap shrinks by the day. In fact, computer programs have been writing short news briefs for a few years now. One of the first examples was a summary of a football game between the University of Nevada, Las Vegas and the University of Wisconsin. A mere minute after the third quarter ended, the following post appeared online:

> Wisconsin appears to be in the driver’s seat en route to a win, as it leads 51-10 after the third quarter.
>
> Wisconsin added to its lead when Russell Wilson found Jacob Pedersen for an eight-yard touchdown to make the score 44-3. The Badgers started the drive at UNLV’s 28-yard line thanks to a Jared Abbrederis punt return.
>
> A one-yard touchdown run by Montee Ball capped off a two-play, 42-yard drive and extended Wisconsin’s lead to 51-3. The drive took 42 seconds. The key play on the drive was a 41-yard pass from Wilson to Bradie Ewing. A punt return gave the Badgers good starting field position at UNLV’s 42-yard line.
>
> A 69-yard drive that ended when Caleb Herring found Phillip Payne from six yards out helped UNLV narrow the deficit to 51-10. The Rebels threw just three passes on the drive.
>
> UNLV will start the fourth quarter with the ball at the 41-yard line.

That might not seem so remarkable, but remember: it was posted a minute after the third quarter ended. Either a human writer was drafting it while the third quarter was still being played, or something else was. The Big Ten Network has been using Narrative Science since 2010, boosting its web traffic by an estimated 40% for football and basketball score reports. Information like sports scores or crime statistics is perfect material for robo-journalists because little analysis is required and the numbers largely tell the story. Narrative Science’s software can convert quantitative data into language, and not just any language: it produces grammatically and structurally sound sentences and paragraphs, and a 500-word article costs only about $10 to generate.
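To make “converting quantitative data into language” a little more concrete, here is a minimal Python sketch of one simple data-to-text technique: pick a phrase based on what the numbers say, then slot the numbers into a sentence. It is only an illustration, not Narrative Science’s method; the `ScoreUpdate` record, the `lead_sentence` function, and the margin thresholds (21 and 8 points) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ScoreUpdate:
    # Hypothetical input record: the kind of structured data a stats feed might supply.
    # Assumes leader_points >= trailer_points.
    leader: str
    trailer: str
    leader_points: int
    trailer_points: int
    period: str


def lead_sentence(update: ScoreUpdate) -> str:
    """Choose wording from the score margin, then fill in the numbers."""
    margin = update.leader_points - update.trailer_points
    score = f"{update.leader_points}-{update.trailer_points}"
    if margin == 0:
        return f"{update.leader} and {update.trailer} are tied {score} after the {update.period}."
    # Hypothetical thresholds for how confident the phrasing should sound.
    if margin >= 21:
        framing = f"{update.leader} appears to be in the driver's seat en route to a win"
    elif margin >= 8:
        framing = f"{update.leader} is pulling away from {update.trailer}"
    else:
        framing = f"{update.leader} clings to a narrow lead over {update.trailer}"
    return f"{framing}, as it leads {score} after the {update.period}."


if __name__ == "__main__":
    print(lead_sentence(ScoreUpdate("Wisconsin", "UNLV", 51, 10, "third quarter")))
    # prints: Wisconsin appears to be in the driver's seat en route to a win, as it leads 51-10 after the third quarter.
```

Commercial systems presumably use far richer rules and vocabularies; this only shows the basic shape of mapping numbers to phrasing.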

Narrative Science uses a three-part article-generation system. First, it gathers data to “create an appropriate narrative structure to meet the goals of your audience.” Then a program called Quill harvests the most important facts and organizes them into a story. Finally, it raises the piece’s sophistication by “answer[ing] important questions, provid[ing] advice, and deliver[ing] powerful insight in a precise, clear narrative.” And presto, you’ve got an article.

Narrative Science isn’t the only company creating story-writing programs. Last March, within three minutes of an earthquake shaking the Los Angeles area, the L.A. Times had an article about it on its website. That article was written by Quakebot, an algorithm developed by L.A. Times journalist and programmer Ken Schwencke. The earthquake shook him awake at 6:25 a.m., and by the time he got to his computer, a story about the quake was already waiting for him; all he had to do was click “publish.” Schwencke programmed Quakebot to pull data from U.S. Geological Survey alerts and use it to fill in a template. This is what Quakebot wrote:

> A shallow magnitude 4.7 earthquake was reported Monday morning five miles from Westwood, California, according to the U.S. Geological Survey. The temblor occurred at 6:25 a.m. Pacific time at a depth of 5.0 miles.
>
> According to the USGS, the epicenter was six miles from Beverly Hills, California, seven miles from Universal City, California, seven miles from Santa Monica, California and 348 miles from Sacramento, California. In the past ten days, there have been no earthquakes magnitude 3.0 and greater centered nearby.
>
> This information comes from the USGS Earthquake Notification Service and this post was created by an algorithm written by the author.
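Schwencke’s code isn’t shown here, but the template-filling idea is easy to illustrate. The Python sketch below regenerates the first two paragraphs of the story above from a handful of values. It assumes the USGS alert has already been parsed into fields; the `QuakeAlert` record and its field names, the `write_story` and `miles_from` helpers, and the 43-mile (roughly 70 km) cutoff for calling a quake “shallow” are illustrative assumptions, not Quakebot’s actual design. Numbers below ten are spelled out, as newspaper style usually requires.

```python
from dataclasses import dataclass

# Assumption: quakes up to roughly 70 km (about 43 miles) deep count as "shallow".
SHALLOW_MAX_MILES = 43

_SMALL_NUMBERS = ["zero", "one", "two", "three", "four",
                  "five", "six", "seven", "eight", "nine"]


def ap_number(n: int) -> str:
    """Spell out whole numbers below 10, as newspaper style usually does."""
    return _SMALL_NUMBERS[n] if 0 <= n < 10 else str(n)


def miles_from(place: str, miles: int) -> str:
    """Render a distance phrase such as 'six miles from Beverly Hills, California'."""
    unit = "mile" if miles == 1 else "miles"
    return f"{ap_number(miles)} {unit} from {place}"


@dataclass
class QuakeAlert:
    # Hypothetical fields, assumed already parsed out of a USGS notification.
    magnitude: float
    depth_miles: float
    when: str                      # e.g. "Monday morning"
    local_time: str                # e.g. "6:25 a.m."
    places: list[tuple[str, int]]  # (place, miles from epicenter), nearest first


def write_story(alert: QuakeAlert) -> str:
    """Fill a fixed two-paragraph template with values from the alert."""
    shallow = "shallow " if alert.depth_miles <= SHALLOW_MAX_MILES else ""
    (nearest, nearest_miles), *others = alert.places
    story = (
        f"A {shallow}magnitude {alert.magnitude:.1f} earthquake was reported "
        f"{alert.when} {miles_from(nearest, nearest_miles)}, "
        "according to the U.S. Geological Survey. "
        f"The temblor occurred at {alert.local_time} Pacific time "
        f"at a depth of {alert.depth_miles:.1f} miles."
    )
    if others:
        # Join the remaining places as "a, b, c and d" (no serial comma).
        parts = [miles_from(place, miles) for place, miles in others]
        listed = parts[0] if len(parts) == 1 else ", ".join(parts[:-1]) + " and " + parts[-1]
        story += f"\n\nAccording to the USGS, the epicenter was {listed}."
    return story


if __name__ == "__main__":
    alert = QuakeAlert(
        magnitude=4.7,
        depth_miles=5.0,
        when="Monday morning",
        local_time="6:25 a.m.",
        places=[
            ("Westwood, California", 5),
            ("Beverly Hills, California", 6),
            ("Universal City, California", 7),
            ("Santa Monica, California", 7),
            ("Sacramento, California", 348),
        ],
    )
    print(write_story(alert))
```

Because every sentence is fixed in advance, a bot built this way can publish within minutes of an alert, but it can only say what its template anticipates; the digging is left to human reporters.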

Sure, it’s just the basics, but within six hours, human reporters had developed it into a longer, front-page story. The L.A. Times has a similar bot that churns out an informational post whenever there’s a homicide. Schwencke doesn’t feel threatened by programs such as Quakebot; instead, he argues that they help journalists by getting the basic information out quickly so that reporters can start digging for details and insights.

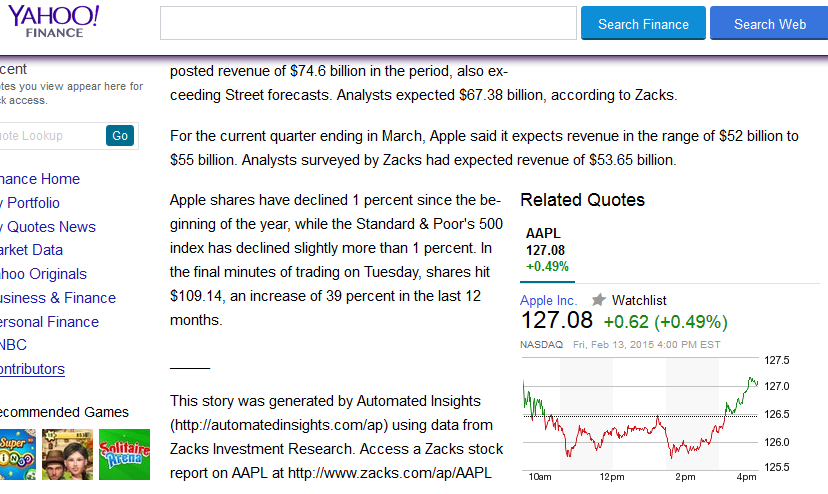

The Associated Press has also been using robot journalists for months. Last summer, the AP established a partnership with Automated Insights, a company that uses its own platform, Wordsmith, to “automatically turn financial data into stories.” Yahoo, Comcast, and Allstate also use Automated Insights to publish millions of articles per week; the Wordsmith system can reportedly crank out a staggering 2,000 articles per second. If you’re curious about what those articles look like, check out this Automated Insights-generated article on Apple’s first-quarter earnings. Most of the time, the only way to know who or what wrote a piece is the one-sentence disclaimer at the bottom. Just before his death, N.Y. Times journalist David Carr tweeted about a computer-generated article on the paper’s earnings:

> I followed a link and read an earnings story about the NYT … written by a robot. http://t.co/zgWXMVxYRf Gee.
>
> David Carr (@carr2n), February 3, 2015

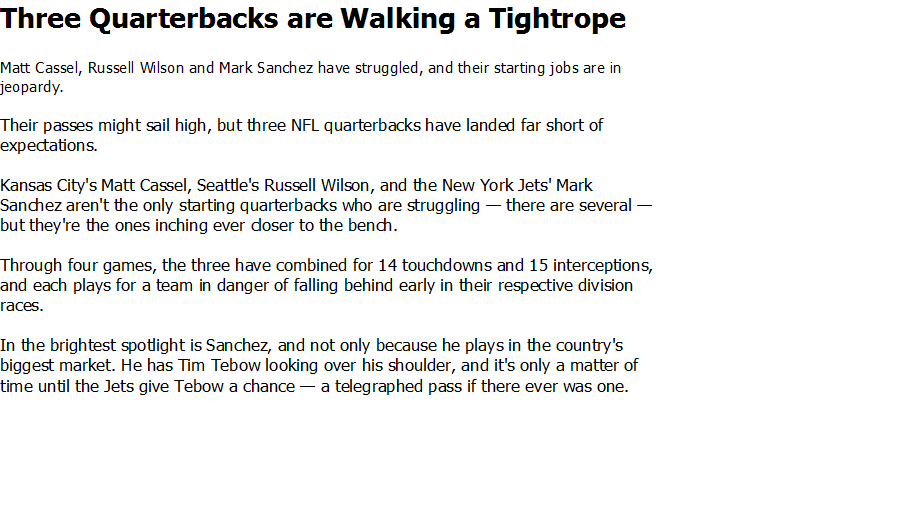

While a lot of the computer-generated articles seem fairly rote and devoid of personality, it may only be a matter of time until, in the immortal words of South Park, robot journalists take our jobs. If you think those concerns are premature, check out the two samples below, as published in a special issue of Journalism Practice about the future of journalism. Which do you think was written by a human and which was written by a computer?

The test revealed that the “software-generated content … [was] perceived as… descriptive, boring and objective, but not necessarily discernible from content written by journalists.” Respondents generally found the computer-written article more informative and trustworthy, but less readable than the human-written one. “Perhaps the most interesting result in the study is that there are [almost] no … significant differences in how the two texts are perceived by the respondents,” study author Christer Clerwall wrote. “The lack of difference may be seen as an indicator that the software is doing a good job, or it may indicate that the journalist is doing a poor job – or perhaps both are doing a good (or poor) job?”

In case you were wondering, the second example was written by the human.

I suppose it’s only fitting that robots start writing the news, seeing as how robots are now also delivering it.

Before long, robo-journalists may be writing and editing news content on their own. After that, even Pulitzer-caliber journalists could find themselves outpaced in every area of writing.